Last Updated on March 2, 2026

Private AI for Word represents the next frontier in data ownership, bringing a taste of general intelligence directly into your document drafting. According to Deep Cogito's blog, the history of AI breakthroughs, from AlphaGo to the latest reasoning engines, suggests that superhuman performance is born from two key ingredients: Advanced Reasoning and Iterative Self-Improvement. Advanced Reasoning lets a system derive significantly better solutions simply by spending more computation ("thinking time") on a problem, while Iterative Self-Improvement lets it refine its own intelligence without being strictly bounded by the limitations of a human overseer.

Until recently, most LLMs were held back by two factors: (1) smaller models could not outperform the larger ones they were distilled from, and (2) the largest models remained constrained by the human-curated data used to train them. Cogito-v1-preview-qwen-32B addresses both using Iterated Distillation and Amplification (IDA), which Deep Cogito presents as an initial approach to overcoming these constraints by combining iterative self-improvement with advanced reasoning.

By running LocPilot as a local Add-in, you can now deploy this powerful model locally to enable your Private AI for Word. This direction is at the core of our Local LLM Benchmarks for Microsoft Word, where we explore the move toward 100% data security on your intranet.

To see it in action, watch our quick demo video. The demo is powered by GPTLocalhost, which offers the same core features for individual use. LocPilot in Word is the Intranet edition of GPTLocalhost designed for enterprise users and team collaboration. For a quick demo of LocPilot, please click here.

For more creative uses of local and private LLMs in Microsoft Word, explore additional demos available on our channel at @LocPilot.

Technical Profile: Why Cogito-32B for Word? (Download Size: 19.85 GB)

A few months after our test, Cogito released their v2.1 models. According to the latest research from Deep Cogito, “the new models are trained via process supervision for the reasoning chains. As a result, the model develops a stronger intuition for the right search trajectory during the reasoning process, and does not need long reasoning chains to arrive at the correct answer.”

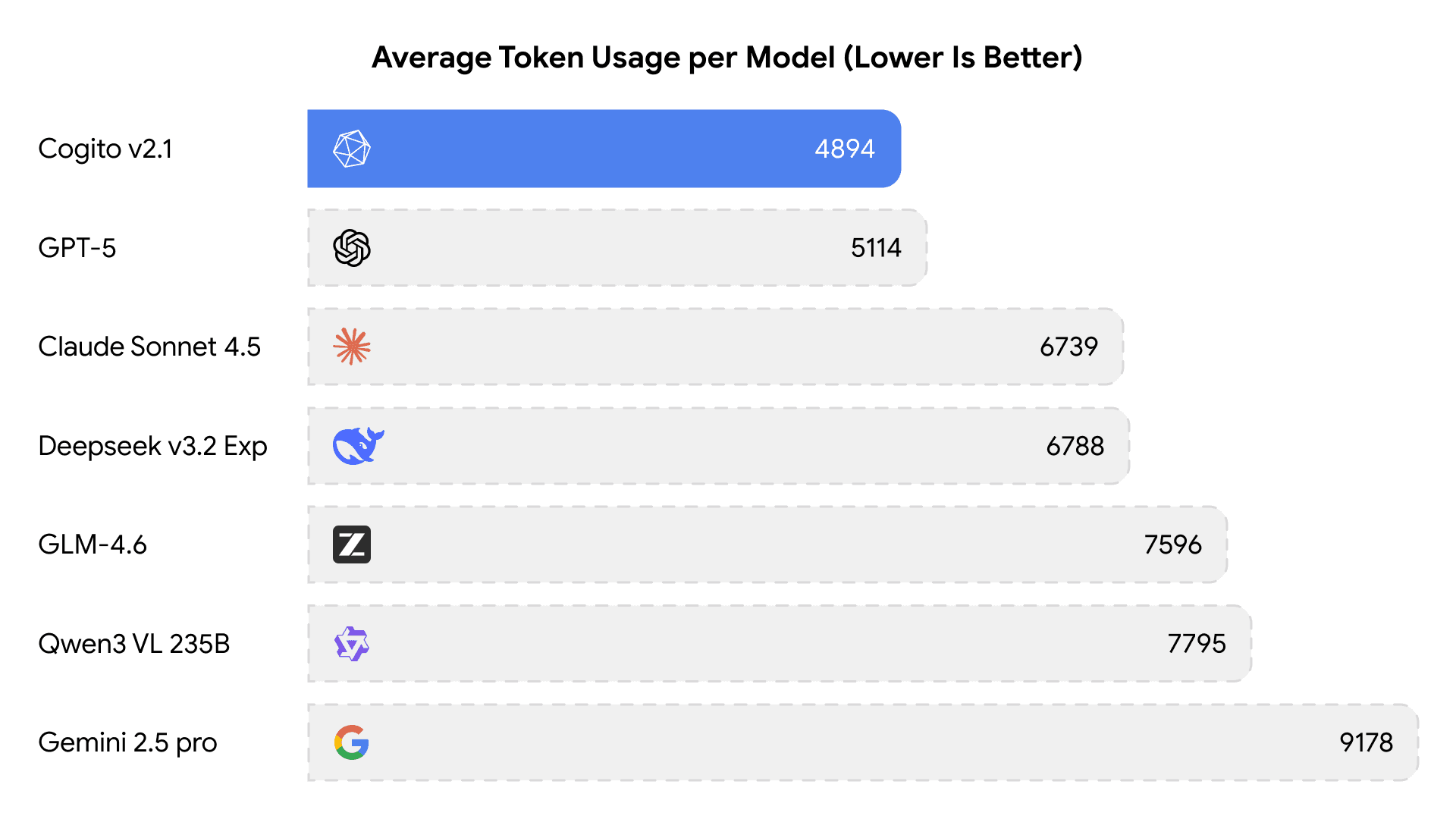

In fact, according to Deep Cogito, Cogito v2.1 currently uses the lowest average number of tokens among reasoning models of similar capability, as the figure above shows.

For Private AI for Word users, this translates to faster response times and lower hardware overhead without compromising on logical depth. Given this gain in efficiency, the series is a potential addition to your local toolkit, offering enterprise-grade reasoning that fits comfortably within a local environment.

Deployment Reminders: Running Cogito-32B Locally

Our primary testing was conducted on an M1 Max with 64 GB of unified memory, which was sufficient to run the model. The main hardware requirement for running Cogito-32B locally is enough VRAM (video RAM) or unified memory, because the unquantized model exceeds typical consumer-grade memory capacities. The exact requirement depends heavily on the quantization level used.

- Minimum (Quantized): Roughly 16 GB to 24 GB for quantized versions (e.g., 4-bit or Q4_K_M). Assuming the 19.85 GB download listed above is the 4-bit file, a 24 GB GPU such as an NVIDIA RTX 3090/4090 or an Apple Silicon Mac with 32 GB or more of unified memory can hold the weights, while a 16 GB card would likely need to offload some layers to system RAM.

- System RAM: A minimum of 16 GB of system RAM is recommended, with 32 GB+ for smoother operation, especially if offloading some layers to system RAM.
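The memory figures above can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below is only an illustration: the function names are hypothetical, the 32-billion parameter count is taken at face value from the model name, and the 2 GB allowance for context cache and runtime buffers is an assumed placeholder rather than a measured value.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes) for a given precision."""
    # billions of parameters * bits per parameter / 8 bits per byte = GB
    return params_billion * bits_per_weight / 8


def effective_bits_per_weight(file_gb: float, params_billion: float) -> float:
    """Back out the average bits per weight implied by a model file size."""
    return file_gb * 8 / params_billion


def fits_in_memory(weights_gb: float, available_gb: float,
                   overhead_gb: float = 2.0) -> bool:
    """Rough check: weights plus an ASSUMED overhead must fit in memory."""
    return weights_gb + overhead_gb <= available_gb


if __name__ == "__main__":
    params = 32.0        # nominal parameter count, in billions (from the model name)
    download_gb = 19.85  # download size quoted in this article

    # Unquantized 16-bit weights: 32 * 16 / 8 = 64 GB
    print("FP16 weights (GB):", weight_memory_gb(params, 16))

    # Average precision implied by the quoted download size (about 4.96 bits)
    print("Implied bits/weight:", round(effective_bits_per_weight(download_gb, params), 2))

    for available in (16.0, 24.0, 64.0):
        print(f"{available:.0f} GB device fits quantized weights:",
              fits_in_memory(download_gb, available))
```

Under these assumptions, the 16-bit weights alone come to about 64 GB, which is why quantization matters here, and the 19.85 GB download fits on a 24 GB GPU or a 64 GB Mac but not fully on a 16 GB card. Real usage also varies with context length and the inference runtime, so treat the result as a starting estimate.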

The Local Advantage

Running your LLMs locally via LocPilot ensures:

- Air-Gapped Security: Operate entirely within your intranet — no external connections.

- Cost Savings: Eliminate subscription fees for the entire team — no ongoing costs.

- Model Flexibility: Easily host and switch models to suit your use cases — no vendor lock-in.